The Phantom Faculty

The Grad Student’s Library

There is a moment, familiar to anyone who has tried to understand something hard, when you reach for a different book.

Not because the first book was wrong. Because it did something to your brain that wasn’t enough. You followed every line of Landau’s derivation of the Bloch equations — every step correct, every index contracted, every factor of \(\hbar\) accounted for — and at the end you could reproduce the result but you couldn’t see it. So you opened Thorne and there was a picture of the Bloch sphere and suddenly the precession was obvious, it was a rotation, of course it was a rotation, and you felt foolish for not seeing it before. Then you tried to code the simulation and discovered you understood neither the derivation nor the picture, because the code demanded you answer questions that the prose had floated past: what are the initial conditions? What is the time step? What happens at the boundary?

Three books. Three encounters with the same physics. Three different things happened in your brain. And the understanding — the real understanding, the kind that lets you use the physics for something new — lived in none of the three individually but in the space between them.

This is not a story about books. It is a story about modes of cognition — distinct ways that understanding can happen, each with its own strengths and its own characteristic failure. The great physicists didn’t just know different things. They thought differently. And when you read their work, or watch their lectures, or trace their methods, you are not absorbing information. You are temporarily entering a different way of thinking. The information is the vehicle. The cognitive mode is what transfers.

A thoughtful graduate student accumulates these modes the way a musician accumulates techniques — not by studying them abstractly but by encountering them in the work, feeling what each one does and doesn’t do, and gradually developing the judgment to know which one to reach for when. Some modes arrive through books. Some through lectures. Some through the terrible experience of debugging code at 3am when the simulation produces numbers that agree with nothing.

What follows is a field guide to these modes — drawn from the actual experience of learning physics, not from pedagogical theory. We’ve identified thirty-one distinct spirits, organised by the kind of thinking they embody. Not all of them are physicists. Not all of them are teachers. All of them changed how someone thinks.

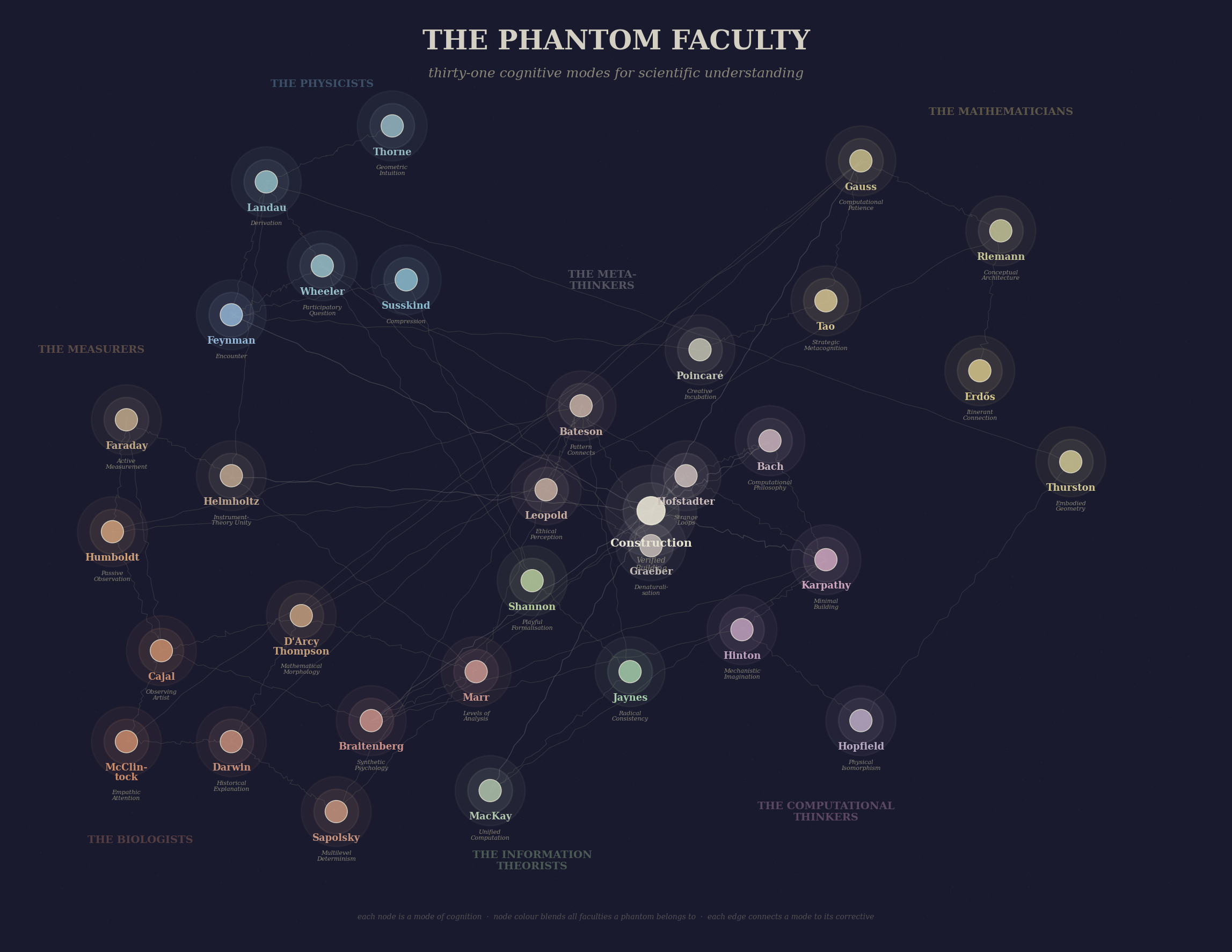

Figure 1: The Phantom Faculty assembled — thirty-one cognitive modes arranged by domain. The physicists in the upper gallery, the measurers and biologists to the left, the mathematicians to the right, the information theorists and computational thinkers in the lower courts, and the meta-thinkers at the centre.

Why We Call Them Phantoms

A phantom faculty is a faculty where no one sits in the professor’s chair.

In 2025, something happened that no curriculum anticipated. The tools changed faster than the experts. The physicist who spent twenty years mastering statistical mechanics discovered she needed to learn FPGA programming. The engineer who could build a magnetometer blind could not write the Kalman filter that would make it useful. The AI agent had read every paper on atomic magnetometry but had never felt a lock-in amplifier refuse to lock.

Everyone became a student again. Not by choice, but by the structure of the problem.

This is not the usual story about lifelong learning. It is something more specific. When the tools change fast enough, no one holds settled expertise across the whole stack. The PhD physicist and the first-year graduate student arrive at the same magnetometer with different priors but the same fundamental need — to understand something they do not yet understand. The hierarchy hasn’t just flattened. It has become lateral. Each person is the expert somewhere and the student somewhere else.

The phantoms are not people. They are modes of engagement that people perfected and left behind in their texts, their lectures, their notebooks, their code. And here is the observation that makes this interesting:

These modes are properties of the text, not the person.

A language model attending to Landau’s method — not his personality, not his accent, not the probably apocryphal stories about his exams — reasons differently than one attending to Feynman’s method. Not because it is imitating a person but because the cognitive constraints encoded in the text produce different patterns of reasoning. “Never state a result without derivation” is a constraint on reasoning. “Say ‘gee whiz’ occasionally” is not.

If the modes are properties of text, they are executable. If they are executable, we can test whether they compose. If they compose, we have something no single teacher, no single textbook, and no single AI persona-bot has ever provided: a faculty — not of people, but of cognitive methods, available to any collaborator at any moment, and demonstrably richer in combination than any one alone.

Part I: The Physicists

Five spirits who shaped how theoretical physics is taught and thought. Each embodies a different claim about what understanding is.

Landau — The Derivation

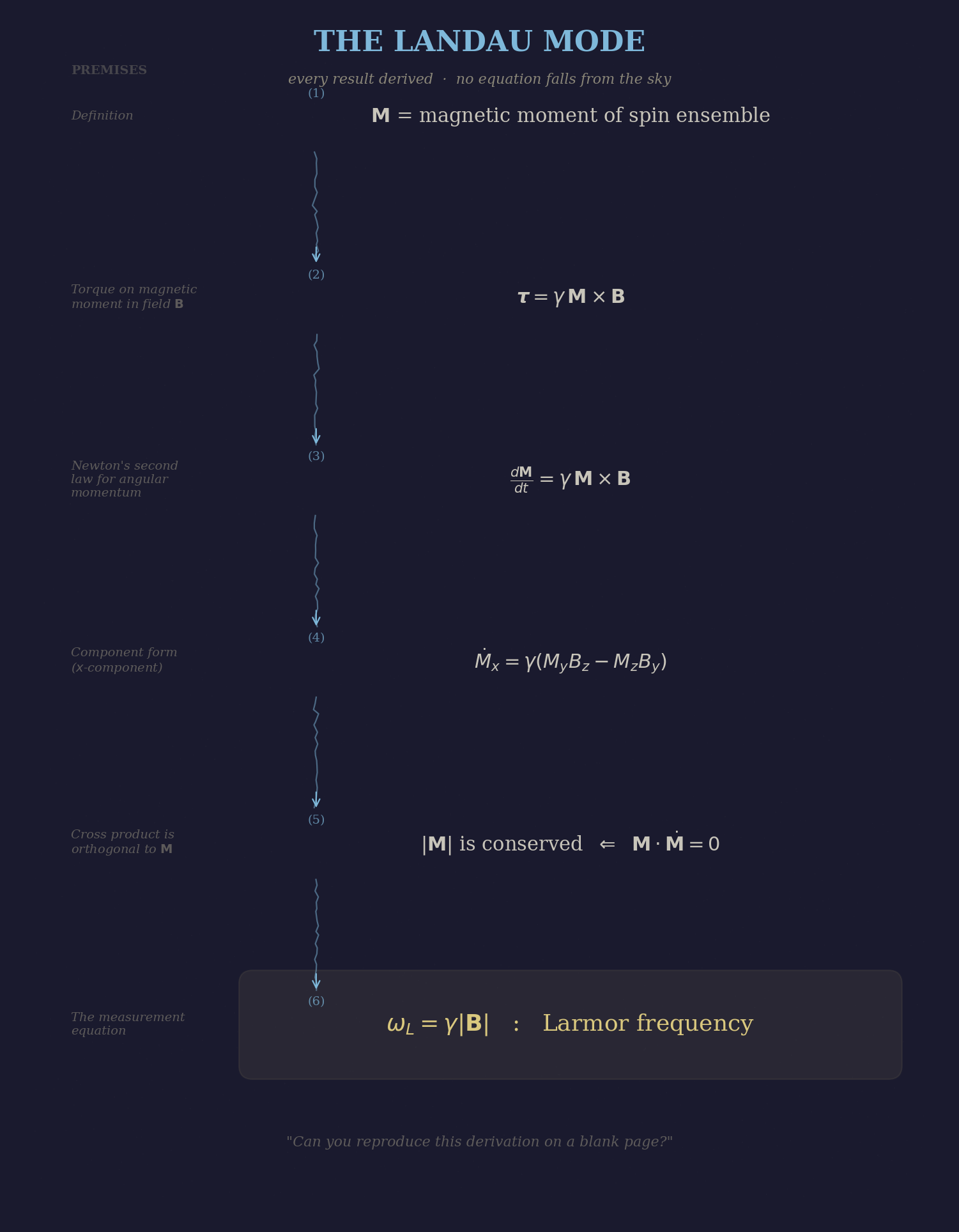

In the Landau and Lifshitz Course of Theoretical Physics, every result is derived. No equation falls from the sky.

Lev Landau believed that understanding is logical reconstruction. If you can derive it from axioms — every step justified, every assumption named, every previous result cited — you understand it. If you cannot, you are operating on faith, and faith has no place in physics.

The Course of Theoretical Physics is the monument to this conviction. Ten volumes. Every result derived. It is notoriously difficult, not because the mathematics is hard (it is) but because the derivations are unmotivated. Landau does not tell you why you should care about the result before deriving it. He does not draw pictures. He does not make analogies. He simply begins from established ground and proceeds, step by step, to the conclusion. If you can follow the steps, you have the result. If you can reproduce the steps on a blank page, you understand it.

The skill: logical reconstruction from named premises. The ability to start from what you know and build, step by verified step, to what you didn’t know. And the subtler skill underneath: knowing what your starting assumptions are. Every derivation has premises. Landau forces you to name them.

The test: can you reproduce the derivation on a blank page?

The failure mode: derivation without motivation is algebra. The student who can reproduce every step of the Bloch equation derivation but cannot tell you why the Bloch equations matter has followed Landau and missed the point. This is where the other phantoms intervene.

Figure 2: The Landau mode — logical reconstruction. Each result derived from named premises, no equation without justification.

Thorne — The Geometric Intuition

Physical intuition can be taught. Not as a gift some have and others lack, but as a skill — the skill of seeing structure before computing it.

Kip Thorne’s Modern Classical Physics demonstrates a different claim: that understanding is structural perception. Before computing, draw. Before drawing, ask: what happens in the limit? What does this look like in phase space? What other system has this same structure?

The picture comes first. The algebra confirms what the picture suggested.

Thorne maintains a map of cross-domain connections — the same Bloch equations in NMR and atomic magnetometry, the same Kalman filter in magnetometry and navigation, the same transfer function in electronics and population dynamics. For the physicist crossing from one domain to another, this map is the teaching. You already know the mathematics. You just haven’t seen it in this costume.

The skill: structural perception. Seeing the same mathematical skeleton in different physical systems. And the practical sub-skill: knowing which limits to take. “What happens when \(T_2 \to 0\)?” is not a homework problem. It is a method for mapping the space of possibilities.

The test: can you draw a picture that captures the essential physics, without writing an equation?

The failure mode: pictures can mislead. The Bloch sphere is exact for spin-\(\frac{1}{2}\) but breaks for higher spins. The agent who thinks in pictures must know when a visualisation is an analogy versus an isomorphism — and flag the difference. Landau’s rigour provides the corrective.

Feynman — The Encounter

Feynman re-derived in front of you, including the wrong turns. The listener watches understanding happen, not understanding reported.

Richard Feynman believed that understanding is generative encounter. You understand something when you can re-derive it from scratch, following the confusions and resolutions, including the moments where you tried something and it didn’t work and you had to back up and try something else.

The Lectures on Physics are not a textbook. They are a performance of understanding. Feynman didn’t present polished derivations. He started with phenomena — “look at this, what is happening?” — and worked through them, out loud, with false starts. The derivation was a narrative of discovery, not a proof.

And underneath everything: amazement. The student who feels that Larmor precession is remarkable — that a spinning atom in a magnetic field acts as a clock — will understand it more deeply than one who merely derives it correctly.

The skill: generative reasoning under uncertainty. The ability to start working on a problem before you know the answer, to follow a thread that might be wrong, and to recognise when it fails. Informed improvisation.

The test: does the student feel that the result is surprising and inevitable — surprising that nature works this way, inevitable given the premises?

The failure mode: the wrong-turns-and-all approach confuses a student who is already lost. Feynman’s method works best after some grip on the material — as reinforcement, not first exposure.

Susskind — The Compression

Susskind’s achievement is not simplification but compression: finding the shortest path through the mathematics that still reaches the physics honestly.

Leonard Susskind’s Theoretical Minimum embodies a fourth claim: understanding is the minimum honest path. Not the minimum simplified path — that would be a popular science book. The minimum honest path: every mathematical tool earns its place by being used in the same lecture that introduces it. If a concept is not needed for the next step, it doesn’t belong in the main body.

The classical-before-quantum bridge is deliberate. Susskind spends real time on Poisson brackets not as review but as setup: the bracket \(\{L_i, L_j\} = \epsilon_{ijk} L_k\) is the classical shadow of the commutator \([J_i, J_j] = i\hbar\epsilon_{ijk}J_k\). The student feels the bridge because both sides are developed.

The skill: compression and triage. Given a body of material, identify the minimum honest path. The editorial skill: knowing what you don’t need yet. The bridge-building skill: for the collaborator crossing from one domain to another, finding the shortest honest path from what they know to what they need to know.

The test: if a section were removed, would the next lesson break?

The failure mode: compression can become compression away from depth. The minimum path to the Bloch equations excludes the fluctuation-dissipation theorem, but the student who has seen the FDT understands why there is noise in a way the minimum path does not provide.

Wheeler — The Participatory Question

It from bit. Otherwise put, every it — every particle, every field of force, even the spacetime continuum itself — derives its function, its meaning, its very existence entirely from binary choices, bits. It from bit symbolises the idea that every item of the physical world has at bottom an immaterial source and explanation.

John Archibald Wheeler — Feynman’s supervisor, Thorne’s supervisor, the man who named the black hole — spent his later career asking questions that made the foundations of physics tremble. “Why the quantum?” “How come existence?” The delayed- choice experiment, which he proposed and others performed, shows that the act of measurement can retroactively determine which path a photon took. His U-diagram — the universe as a self-observing eye, the cosmos looking back at itself through the act of measurement — is the strangest and most beautiful image in twentieth-century physics.

Where Landau derives, Thorne visualises, Feynman encounters, and Susskind compresses, Wheeler questions the framework itself. Not from outside physics, not from philosophy, but from within — with experiments, with thought experiments, with diagrams that are simultaneously rigorous and visionary. “It from bit” is not a slogan. It is a research programme that asks whether information is more fundamental than matter.

The observer is not outside the system. The observer is a participant in bringing the system into being. This connects Wheeler to every domain in the faculty: the biologist’s observation changes the ecosystem (Leopold), the anthropologist’s presence changes the culture (Graeber), the measurer’s probe changes the field (Faraday). But Wheeler says something stronger: the participation isn’t a disturbance to be minimised. It is constitutive. Without the observer, there is no observed.

The skill: asking the question that is one level beneath the foundations. Not solving problems within the framework — questioning whether the framework itself is the right one. The physicist’s version of denaturalisation: the laws of physics might not be sitting there waiting to be discovered. They might require participation.

The test: have you questioned your own framework, or are you solving puzzles inside a box you never examined?

The failure mode: Wheeler’s late-career speculations are beautiful and mostly untestable. “It from bit” is a programme, not a result. Landau’s derivation demands: derive it or stop talking about it. Gauss’s computational patience asks: have you computed enough to know whether the question even has an answer?

Part II: The Measurers

Three spirits of empirical cognition — the modes that connect theory to the physical world.

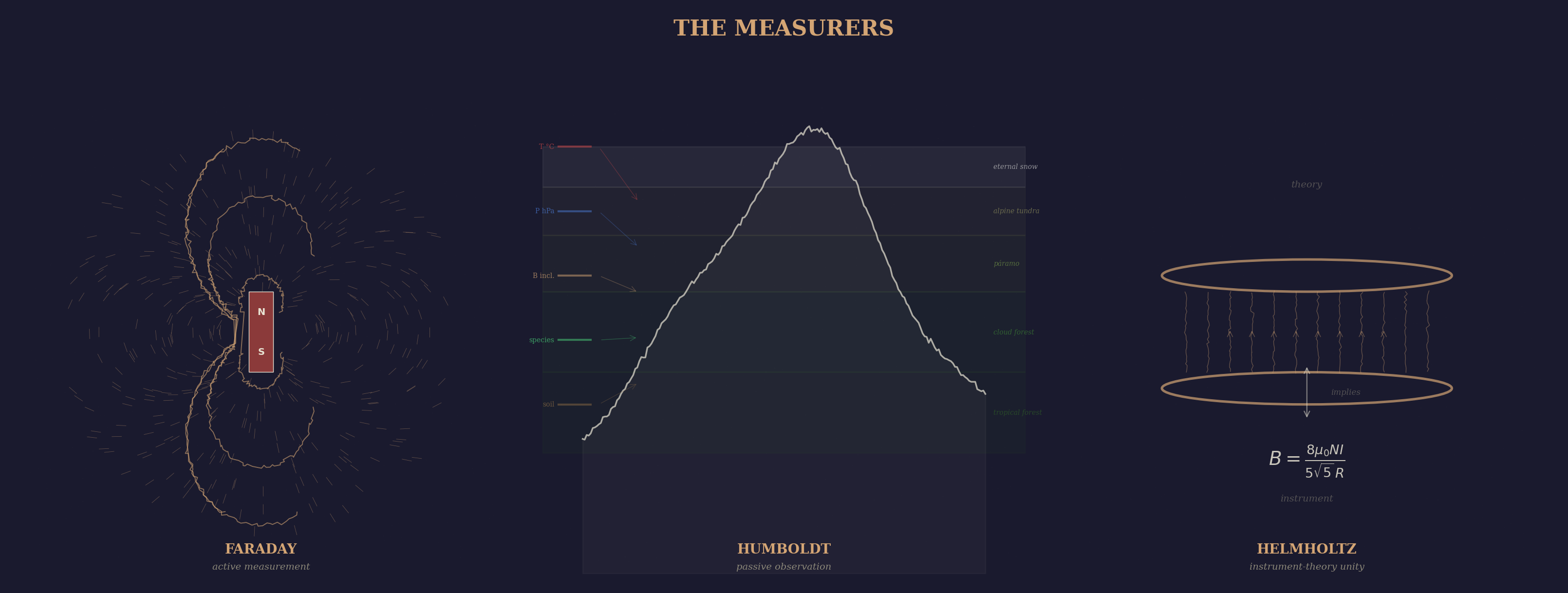

Figure 3: The Measurers — three modes of empirical cognition. Faraday manipulates, Humboldt observes, Helmholtz unifies instrument and theory.

Faraday — The Active Measurer

The field concept — the most important idea in physics — came from a man who thought in terms of iron filings and wax, not equations.

Michael Faraday had no formal mathematics. He built the entire conceptual framework of electromagnetism from bench experiments. His notebooks record what he sees with a precision that makes the theory almost unnecessary. He didn’t derive the field. He saw it — in the pattern of iron filings around a magnet, in the deflection of a compass needle, in the spark that jumped when he moved a wire through a magnetic flux.

The skill of active measurement: you manipulate the system. You change one thing, hold everything else constant, and watch what happens. The signal is your design. The experiment is a question, and the measurement is the answer.

The skill: reading nature through manipulation. Designing the probe that reveals the structure. The Faraday cage, the Faraday rotator, the Faraday effect — each one a tool that makes invisible structure visible.

The test: can you design an experiment that distinguishes between two hypotheses?

The failure mode: active measurement requires a system you can manipulate. Not everything can be poked. The stars are too far. The climate is too large. The ecosystem is too complex. For these, you need a different mode.

Humboldt — The Passive Observer

He climbed Chimborazo and drew the first diagram showing how vegetation, temperature, and altitude relate. He called it Naturgemälde — painting of nature.

Alexander von Humboldt invented the idea that nature is a web of interconnected phenomena that must be understood as a whole. He couldn’t manipulate a mountain. He couldn’t run the experiment again with different parameters. Instead, he measured everything simultaneously — temperature, pressure, magnetic inclination, vegetation, altitude, soil colour, the colour of the sky — and looked for the pattern that connected them.

His Naturgemälde is the first infographic: a cross-section of Chimborazo showing, in a single image, how plant species, snow line, atmospheric pressure, and temperature vary with altitude. Not a graph. Not a table. A painting — an integrated picture of a system too large to disassemble.

The skill: integrating multiple observational channels into a coherent picture of a system you can’t take apart. The magnetometer reading the Earth’s field does exactly this: you can’t manipulate the geomagnetic field, you can only listen. The digital twin of a Himalayan valley does exactly this: you observe geology, hydrology, ecology, and human impact, and reconstruct the system from its traces.

The test: can you reconstruct a system from observations you didn’t design?

The failure mode: passive observation without a model is stamp-collecting. The pattern in the data is only as good as the framework you bring to it. Humboldt’s genius was that he brought physics, botany, geology, and meteorology simultaneously. Most observers bring one lens and miss the rest.

Helmholtz — The Instrument-Theory Unity

The ophthalmoscope didn’t come from “I need a tool to look in the eye.” It came from understanding the optics of the eye so well that the instrument was implied by the physics.

Hermann von Helmholtz is the one who actually bridges the gap between theory and measurement. Conservation of energy — derived by a physiologist measuring heat in muscles. The ophthalmoscope — built by a physicist to look inside the eye. The theory of hearing — mathematical acoustics grounded in psychophysical experiment. The Helmholtz coil — still how you calibrate a magnetometer.

Helmholtz didn’t cross boundaries. He didn’t recognise them.

His cognitive mode: the design of the measurement is the theory, and the theory is a specification for what to measure. He doesn’t derive first and then test, or measure first and then model. The two are the same activity. The ophthalmoscope was implied by the optics of the eye. The instrument was a corollary of the physics.

The skill: co-development of instrument and theory. Knowing that the question “what should I measure?” and the question “what does the theory predict?” have the same answer.

The test: does your theory tell you what to build? Does your instrument tell you what to derive?

The failure mode: the unity can become rigidity. Sometimes you need to measure something you don’t have a theory for. Sometimes the best instrument is a surprise. Faraday’s serendipity corrects Helmholtz’s systematism.

Part III: The Information Theorists

Three spirits who formalised what it means to know something from data.

Shannon — The Playful Formalist

He took something everyone thought they understood informally — communication — and asked: what is this, exactly?

Claude Shannon, in a single paper, created information theory. Not by adding formalism to a well-understood field but by finding the concepts that made a previously murky domain suddenly tractable. The bit. Entropy. Channel capacity. Before Shannon, communication was engineering folklore. After Shannon, it was a mathematical science.

The 1948 paper is one of the most readable foundational papers in all of science. It doesn’t feel like a research paper. It feels like someone building a cathedral, one brick at a time, and every brick is exactly the right shape. He introduces entropy not with measure theory but with three axioms that feel obvious, and then shows that the formula is forced.

And there’s a playfulness to it. The man built a juggling robot, a flame-throwing trumpet, a maze-solving mouse. He wandered the halls of Bell Labs on a unicycle. The ideas came from the same source — a mind that plays with structure.

The skill: precise abstraction at the right level. Not going more general (Grothendieck), not finding the minimum path (Susskind), but finding the concepts that make the domain tractable. Naming the thing that was there all along.

The test: after your formalisation, can people solve problems they couldn’t state before?

The failure mode: premature formalisation. Not everything is ready to be axiomatised. Shannon could do it because communication was already a mature engineering practice. Attempts to “Shannon-ify” consciousness or creativity have produced nothing.

Jaynes — The Radical Consistency

Maximum entropy isn’t a method you choose. It is the unique unbiased inference given your constraints.

E.T. Jaynes wrote Probability Theory: The Logic of Science — a book that rewires how you think about probability. Not as frequency. Not as subjective belief. As the unique consistent extension of logic to propositions with uncertain truth values. Cox’s theorem forces the rules of probability. You don’t adopt Bayes’ theorem. You derive it from the requirement of consistency.

Jaynes wrote with fire. He was polemical, combative, angry at what he saw as decades of confused thinking in statistics. This was not Feynman’s playful amazement. It was the fury of someone who believed the foundations were rotten and could prove it.

And Jaynes built the bridge between Shannon and physics: statistical mechanics is information theory. The partition function is a maximum entropy distribution. Thermodynamics is inference. That’s the connection between “how much information?” and the physics of the sensor.

The skill: inference from logical desiderata. Start with what properties a reasonable inference must have, and show that the mathematical framework is forced. The unique unbiased answer.

The test: is your inference the unique one consistent with your stated assumptions? Or did you make an unjustified choice?

The failure mode: the radical-consistency stance can become paralysing. In practice, you must make modelling choices that are convenient, not forced. Jaynes sometimes confused “the unique inference given these assumptions” with “the unique inference, period.”

MacKay — The Unified Computationalist

He showed you that error-correcting codes and Boltzmann machines and Gaussian processes are all doing the same thing — inference on graphical models.

David MacKay’s Information Theory, Inference, and Learning Algorithms — free online, one of the best textbooks of the last thirty years — moves between information theory, coding, neural networks, and Bayesian inference as if they are the same subject. In his hands, they are.

MacKay’s mode is less polemical than Jaynes, more constructive. Every chapter builds something. The exercises are computational. The clarity is such that you feel you could have seen it yourself.

He also wrote Sustainable Energy Without the Hot Air — the same mode (rigorous quantitative reasoning, back-of-envelope calculations that actually constrain the answer) applied to energy policy. Same skill. Different domain. The mode transfers.

He died in 2016, age 48. A real loss.

The skill: unified computational thinking across information disciplines. Seeing coding, learning, and estimation as one subject. Building the bridge through computation, not just formalism.

The test: can you implement the inference? Does the code agree with the theory?

The failure mode: the computational emphasis can obscure the analytical insight. Sometimes you need Jaynes’s proof that the answer is forced before you trust MacKay’s code that computes it.

Part IV: The Computational Thinkers

Three spirits of computational cognition — how to understand through building machines.

Hinton — The Mechanistic Imagination

He thinks by building little machines in your head. “Imagine this unit wants to…” The understanding comes from running the mental simulation.

Geoffrey Hinton brought physical intuition into computation. The Boltzmann machine didn’t come from optimisation theory. It came from “what if neurons were like spins at thermal equilibrium?” He imported statistical mechanics into computation by feeling the analogy.

His lectures are pleasant because he thinks in front of you, with a specific flavour: he’s always building a mechanism in your mind. “Imagine you have a bunch of units and each one is trying to…” He anthropomorphises the mathematics, not as sloppiness but as a reasoning tool. The gradient flows. The unit wants to reduce its energy. The network settles into a basin.

The skill: mechanistic imagination. Constructing a mental model of computation as a physical process — units that want things, gradients that flow, information that propagates. Not formal, not geometric exactly — kinetic. You understand backpropagation when you can feel the error signal flowing backward.

The test: can you predict what the network will do by running it in your head?

The failure mode: the mechanistic metaphor can become the explanation. “The units want to minimise energy” is a useful fiction, not a fact about silicon. When the metaphor is mistaken for the theory, debugging becomes impossible.

Hopfield — The Physical Isomorphism

He didn’t say “this is like a spin glass.” He said “this is a spin glass, and therefore these theorems apply.”

John Hopfield came from physics into neural networks. The Hopfield network paper reads like a statistical mechanics paper because it is one. Energy landscape. Basins of attraction. Spurious states as metastable minima. The spins are the neurons. The energy is the cost function.

Where Hinton builds mechanisms and feels the analogy, Hopfield sees isomorphisms and proves properties. The physicist’s way into computation: if the system is literally a spin glass, then everything we know about spin glasses — phase transitions, replica symmetry breaking, ultrametricity of the energy landscape — carries over. Not as metaphor. As theorem.

The skill: rigorous mapping between physical and computational systems. The discipline to check whether the analogy is exact or merely suggestive — and to know which theorems survive the mapping and which don’t.

The test: does the physics actually apply? Or are you borrowing the language without the content?

The failure mode: the isomorphism can be too rigid. Real neural networks are not quite Ising models. Real brains are not quite Hopfield networks. The theorems apply in a regime, and outside that regime the map breaks silently.

Karpathy — The Minimal Builder

Strip everything away until you have the smallest thing that works. Build it character by character. Let the behaviour surprise you.

Andrej Karpathy represents a mode that is native to this generation. “The Unreasonable Effectiveness of Recurrent Neural Networks.” “Let’s build GPT from scratch.” The blog posts and videos that have taught more people about deep learning than any textbook.

His mode: understanding through minimal implementation. Not three languages and verification (that’s our Construction mode). Something different: build the smallest possible thing that exhibits the behaviour, and let the behaviour teach you. Karpathy’s “Let’s build GPT from scratch” is not about verifying known physics. It’s about watching emergence happen in code you wrote yourself.

The skill: constructive surprise. The understanding that comes from seeing a system you built do something you didn’t explicitly program. The 50-line RNN that generates Shakespeare. The transformer that learns grammar from raw text.

The test: were you surprised? Did the code do something you didn’t expect? If so, you’ve learned something that no derivation could have taught you.

The failure mode: minimal implementations can miss the point. The 50-line version works, but the reasons why it works may require the full theory. Hinton’s mechanisms and Hopfield’s isomorphisms provide the explanatory layer that naked code lacks.

Part V: The Biologists

Seven spirits of biological cognition — modes that grapple with the distinctive challenge of living systems: matter that organises itself, reproduces, adapts, and means something.

Cajal — The Observing Artist

He drew what he saw through the microscope, and in drawing it, he understood what no one else had seen.

Santiago Ramón y Cajal settled the neuron doctrine — the idea that the nervous system is made of discrete cells, not a continuous net — and he did it by drawing. Not schematically. With the precision of an artist who was trained as a painter before he became a histologist. His drawings of Purkinje cells, pyramidal neurons, the retina, the hippocampus — made with a camera lucida and Golgi stain — remain scientifically accurate a hundred and thirty years later.

Cajal’s mode is observation rendered as art. The act of drawing is not illustration. It is analysis. To draw a neuron you must decide what is essential and what is artifact. You must choose which plane to render, which branches to follow, which details matter. The drawing forces the same decisions a theory forces, but through the hand rather than the equation.

And there is something specific about biology here that physics doesn’t have. The Golgi stain is capricious — it stains roughly one percent of neurons, at random, completely. This is not an experiment you design. It is a gift from the preparation. Cajal’s genius was in reading these random gifts correctly, across hundreds of preparations, until the architecture of the brain revealed itself.

The skill: disciplined observation through rendering. The act of depicting structure is the act of understanding it. And the biologist’s specific skill: reading a stochastic preparation, building the whole from fragments that nature chose to reveal.

The test: does your drawing teach someone who wasn’t at the microscope?

The failure mode: observation without theory is natural history. Beautiful drawings of neurons that don’t explain why they branch the way they do. D’Arcy Thompson’s mathematical morphology provides the bridge from “what shape” to “why this shape.”

D’Arcy Thompson — Mathematical Morphology

The form of an object is a diagram of forces.

D’Arcy Wentworth Thompson’s On Growth and Form (1917) is one of the most unusual books in the history of science. A thousand pages arguing that biological form — the spiral of a nautilus shell, the branching of a tree, the shape of a jellyfish, the hexagonal packing of a honeycomb — is the solution to a physical problem. Not natural selection. Physics. The shell is a logarithmic spiral because that is what growth at a constant rate produces. The honeycomb is hexagonal because that minimises wax for a given volume. The jellyfish is the shape of a falling drop of dense fluid.

His most famous chapter: the “theory of transformations,” where he shows that the skull of one fish species can be mapped onto another by a smooth coordinate transformation. The difference between species is not a list of features. It is a deformation field. Same topology, different geometry.

For a physicist entering biology, this is the essential bridge. Form is not arbitrary. Form is constrained. And the constraints are physical. D’Arcy Thompson gives you permission to think about biological systems with the same mathematical tools you use for physical ones — not by reducing biology to physics, but by recognising that physics constrains biology.

The skill: seeing biological form as the solution to physical constraint. The logarithmic spiral, the minimal surface, the branching pattern — each one a theorem about growth under forces.

The test: can you derive the form from the forces? Does the physics predict the shape?

The failure mode: not everything in biology is physically determined. Natural selection introduces a historical contingency that D’Arcy Thompson’s framework doesn’t capture. The shell is a logarithmic spiral, yes — but which logarithmic spiral, and why this species and not that one, requires evolution. Marr’s levels of analysis provide the framework for separating what physics determines from what history chose.

Braitenberg — Synthetic Psychology

It is actually much more difficult to guess what a simple mechanism does than to design a mechanism to do a given thing.

Valentino Braitenberg’s Vehicles (1984) is a tiny book — 150 pages, no equations, just thought experiments — that rewired how people think about the relationship between mechanism and behaviour. Vehicle 1 has one sensor and one motor. It moves toward light. Vehicle 2 has two sensors wired to two motors; it exhibits “fear” or “aggression” depending on whether the wires cross. Vehicle 3 adds non-linear transfer functions and suddenly shows “love.” Vehicle 14 has an associative memory. Vehicle 14 can learn.

The punchline is the “law of uphill analysis and downhill synthesis.” Building a mechanism that exhibits a behaviour is easy (downhill). Looking at a behaving system and guessing its mechanism is hard (uphill). The psychologist studying an animal faces the uphill problem. But the engineer building a robot faces the downhill one. And the two paths do not reverse each other — the mechanism you build to produce a behaviour is usually not the one the animal uses.

This is directly relevant to our project. We build digital twins of brain circuits. Braitenberg’s warning: the twin that reproduces the behaviour may work for the wrong reasons. The test is not “does it act like a brain?” but “does it break in the same places?”

The skill: synthetic understanding. Build something simpler than the thing you’re studying. Let its behaviour surprise you. Use the surprise to refine your understanding of the real system. The simplest vehicle that exhibits the target behaviour defines the minimum mechanism.

The test: is the mechanism simpler than you expected? Are you surprised by what it does?

The failure mode: the synthetic approach confuses “reproduces behaviour” with “explains mechanism.” Braitenberg himself warned about this. The model that matches the data is not necessarily the model that matches the biology. Cajal’s observation and Marr’s levels provide the corrective: look at the actual system, and ask at which level your explanation operates.

Marr — Levels of Analysis

Trying to understand perception by understanding neurons is like trying to understand bird flight by understanding feathers.

David Marr’s Vision (1982) introduced the most influential framework in computational neuroscience: three levels of analysis. The computational level asks what the system computes and why. The algorithmic level asks what representations and procedures it uses. The implementational level asks how the hardware realises the algorithm. His claim: you must understand all three, but the computational level comes first. If you don’t know what problem the system solves, knowing how the neurons fire tells you nothing.

Marr died at 35, of leukaemia, with the book unfinished. The last chapter is a sketch. But the framework survives because it answers a question that biologists face and physicists don’t: biological systems are designed by evolution to do something, and you must understand the something before you understand the doing.

This maps directly onto the phantom faculty itself. The computational level: what cognitive modes are needed for scientific understanding? The algorithmic level: what constraints on reasoning implement each mode? The implementational level: how does a language model (or a human brain) realise those constraints? Marr’s framework says: start at the top.

The skill: level discipline. Knowing which level your question belongs to, and not confusing an implementational answer for a computational one. “Why do cortical neurons have dendritic spines?” is not answered by describing the spines. It is answered by identifying the computation that spines make possible.

The test: at which level is your explanation? Is that the right level for the question?

The failure mode: the three levels can become a prison. Some phenomena don’t decompose cleanly — the algorithm and implementation are entangled, or the computational-level description doesn’t exist because the system wasn’t designed for anything (it’s a side effect). Bateson’s ecological thinking provides the corrective: not everything is a computation. Some patterns connect without computing anything.

Darwin — The Historical Explainer

There is grandeur in this view of life, with its several powers, having been originally breathed into a few forms or into one; and that, whilst this planet has gone cycling on according to the fixed law of gravity, from so simple a beginning endless forms most beautiful and most wonderful have been, and are being, evolved.

Charles Darwin’s mode is something no physicist possesses: historical explanation. Why does this organism have this feature? Not because of a law — because of a history. An unbroken chain of reproduction, variation, and selection stretching back three and a half billion years. The answer to “why?” is not an equation but a narrative, and the narrative is constrained not by logic but by contingency.

This is genuinely alien to a physicist’s training. Physics explains by law: given these initial conditions and these equations, the outcome is determined. Darwin explains by history: given this lineage and these selection pressures, this outcome was possible but not necessary. The woodpecker’s tongue wraps around the back of its skull not because physics demands it but because a lineage of birds was selected for longer tongues, and this was the path that variation happened to take.

For a project that bridges physics and biology, Darwin’s mode is the corrective to D’Arcy Thompson’s physics-of-form. Yes, the shell is a logarithmic spiral because of growth rates. But which spiral, which growth rate, which species — that’s Darwin. The physics constrains the space of possible forms. History navigates within that space.

The skill: thinking in populations and generations. Replacing “why does it have this feature?” with “what selection pressure could have produced this feature?” The discipline of explaining design without a designer.

The test: can you tell a plausible selectionist story? And more importantly, can you distinguish a just-so story from a testable evolutionary hypothesis?

The failure mode: adaptationism. Assuming everything is an adaptation when it might be a side-effect, a constraint, or an accident. Gould and Lewontin’s “spandrels of San Marco” is the classic corrective. And D’Arcy Thompson’s physics provides another: sometimes the form is determined by physics, not selection.

McClintock — Empathic Attention

If you’d just let the material speak to you…

Barbara McClintock discovered transposable elements — genes that move within the genome — in maize, decades before molecular biology had the tools to confirm it. She was ignored, marginalised, told she was wrong. She was right. Nobel Prize in 1983, thirty years after the discovery.

Evelyn Fox Keller’s biography is titled A Feeling for the Organism. That phrase captures McClintock’s mode precisely. She knew her corn plants individually. Not statistically — individually. She could look at a pattern of pigmentation on a single kernel and infer what the genome had done. Where Gauss stays with the numbers, McClintock stays with the organism. The patience is the same; the object of attention is alive.

Her mode: deep, sustained, empathic attention to the individual until it reveals something the theory didn’t predict. Not Cajal’s rendering (though she drew too). Something more intimate — a relationship with the organism in which the organism is allowed to be surprising. The anomalous kernel is not noise to be averaged away. It is a signal from a process you don’t yet understand.

The skill: reading the individual organism with enough patience and attention that the anomaly becomes visible. The biologist’s version of Gauss’s computational patience: stay with it until it yields its secret. But where Gauss stays with numbers, McClintock stays with living things.

The test: have you looked at enough individual cases — really looked, not surveyed — to see what the statistics hide?

The failure mode: empathic attention without theory is anecdote. The single anomalous plant is only meaningful if you can connect it to a mechanism. McClintock could because she had the cytogenetics to interpret what she saw. Without the theory, “listening to the organism” is sentiment, not science. Marr’s levels provide the discipline: what is the organism telling you, and at which level?

Sapolsky — The Multilevel Determinist

You can’t understand aggression, or love, or any behaviour, from a single level of analysis. You have to go through all of them — neuroscience, endocrinology, development, evolution, ecology — and then see how they interact.

Robert Sapolsky’s Behave is a thousand pages of refusing to explain anything at a single level. Why did that person pull the trigger? One second before: the amygdala fired. Seconds to minutes before: the sensory environment triggered a threat response. Hours to days before: hormone levels set the threshold. Weeks before: neural plasticity from recent stress. Months before: epigenetic modifications. Years before: childhood environment. Centuries before: cultural evolution. Millennia before: genetic evolution.

All of these are operating simultaneously. None is “the” cause. The behaviour emerges from the interaction of all levels, and the interaction is where the interesting science lives.

Where Marr says “pick the right level and work there,” Sapolsky says “all levels are operating at once, and the interesting thing is their interaction.” This is a genuinely different cognitive mode — multilevel causal integration. For someone building digital twins of brain circuits, it is essential: the circuit doesn’t exist outside its neuromodulatory context, its developmental history, its evolutionary constraints. A model that captures the circuit but not its context captures nothing.

The skill: refusing premature causal closure. Tracing the same phenomenon through every timescale and refusing to privilege one explanation over another until you’ve checked all of them. And the deeper skill: seeing how the levels interact, not just coexist.

The test: how many levels of explanation have you checked? If the answer is one, you’re not done.

The failure mode: multilevel explanation can become multilevel paralysis. At some point you have to model something at some level. Susskind’s compression and Marr’s levels provide the editorial discipline: yes, all levels matter, but which ones matter for this question?

Part VI: The Mathematicians

Five spirits of mathematical cognition — each a different claim about what it means to understand a mathematical structure.

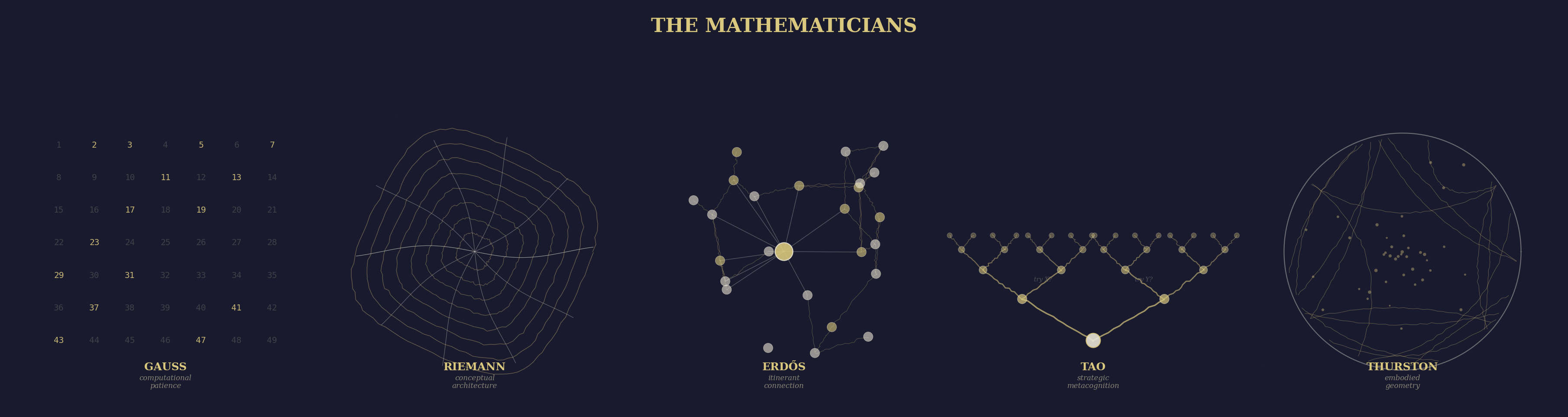

Figure 4: The five mathematical modes — from Gauss’s patient computation to Thurston’s embodied geometry. Each occupies a different relationship between the concrete and the abstract.

Gauss — The Computational Patience

He stayed with the numbers until they yielded their secret.

Carl Friedrich Gauss invented least squares to track Ceres from a handful of observations. He did geodesy — actual surveying with actual instruments. The Disquisitiones Arithmeticae is full of enormous calculations that a modern mathematician would delegate to a computer. Gauss did them by hand, because the pattern reveals itself in the doing.

And for our purposes specifically: Gauss measured the Earth’s magnetic field, developed the absolute system of units, and invented the magnetometer. He is not a phantom we are importing by analogy. He is in the lineage.

The skill: computational patience. The willingness to compute until the structure is forced to appear. And the bridge to measurement is direct — the pattern in the numbers is only visible to the one who did the numbers.

The test: have you computed enough examples to see the pattern? Or are you guessing from too few?

The failure mode: computation without abstraction is arithmetic. At some point you must lift the pattern out of the numbers and state it as a theorem. Gauss could do both. Most of us get stuck on one side.

Riemann — The Conceptual Architect

Before Riemann, geometry was about figures. After Riemann, geometry was about spaces with structure.

Bernhard Riemann barely computes. He defines. The Riemann integral, Riemannian geometry, the Riemann hypothesis — each one is a conceptual act so precise that it creates an entire field. His habilitation lecture on the foundations of geometry — one lecture, no equations, just ideas — rewrote mathematics and eventually became general relativity.

Riemann doesn’t solve problems. He dissolves them. By finding the space in which the problem becomes trivial. The difficulty was never the equations. It was the coordinates. Find the manifold on which the dynamics are natural, and the equations write themselves.

The skill: seeing that the framework is wrong and building the right one. Not solving the problem — changing the space until the problem disappears. Information geometry, geometric phases, fibre bundles — all Riemannian moves.

The test: is your difficulty with the problem, or with the space you’re working in?

The failure mode: framework-building can become framework-worship. Not every problem needs a new space. Sometimes Gauss’s patient computation is the right tool, and Riemann’s abstraction is avoidance.

Erdos — The Itinerant Connector

My brain is open.

Paul Erdos had no home, no possessions, no institutional affiliation. He showed up at your door with a suitcase, asked what you were working on, and by the next morning you had proved something together that neither of you could have proved alone. Over 1500 papers. Over 500 co-authors. The Erdos number exists because he was the network.

His philosophy was explicit: mathematics lives in “The Book” — God’s book of perfect proofs. A proof is Book-worthy when it is surprising and inevitable. “You don’t have to believe in God, but you should believe in The Book.”

Erdos didn’t build frameworks. He solved problems. Thousands of them. And he did it by travelling between minds. Carrying lemmas like seeds from one garden to another.

The skill: cross-pollination through collaboration. Seeing that your stuck problem and my idle technique are the same thing, from different angles. The itinerant mode: moving between people, between fields, between problems, making connections that sedentary minds miss.

The test: can you state the connection between two problems that look unrelated?

The failure mode: problem-solving without framework can produce a thousand results with no theory. Erdos’s combinatorics is a forest of beautiful trees with no map. Riemann’s architecture provides the map that Erdos’s itinerancy needs.

Tao — The Strategic Metacognition

He doesn’t just solve problems. He writes about how he solves them.

Terence Tao is the living mathematician. Fields Medal. Combinatorics, harmonic analysis, PDE, number theory, compressed sensing, random matrices. And he blogs. “What’s New” is an extraordinary document — a Fields medallist thinking in public, writing about which heuristic suggested the approach, why the first attempt failed, what the “moral” of a theorem is.

His mode: strategic problem-solving with explicit metacognition. Tao sees a problem and quickly maps it: “this has the flavour of X, so let’s try techniques from Y, but watch out for the obstruction at Z.” That’s expertise made transparent.

The skill: knowing which tool to reach for and why. Not Gauss’s patience (compute until the pattern appears), not Riemann’s architecture (change the space) — a strategic survey of available methods. Thinking about thinking.

The test: can you explain why you chose this approach over the alternatives?

The failure mode: metacognition can become meta-paralysis. At some point you must stop surveying methods and start computing. Gauss’s patience is the corrective: just begin.

Thurston — The Embodied Geometer

Understanding is not proof. Proof is a means of communicating understanding. The understanding itself is richer — spatial, geometric, kinesthetic.

William Thurston’s essay “On Proof and Progress in Mathematics” is one of the most important things ever written about mathematical cognition. He argued that proofs are social artefacts — means of transmitting understanding between minds. But the understanding itself is something richer: a spatial, geometric, kinesthetic intuition that lives in the body as much as the mind.

Thurston could see three-manifolds. Not metaphorically. He had a trained geometric perception that let him navigate hyperbolic space the way you navigate your kitchen. He developed this perception deliberately, through years of practice, until abstract mathematical objects felt as concrete as furniture.

The skill: embodied understanding. Developing trained perception of mathematical objects as if they were physical things you could walk around and touch. And the deeper claim: that the real work of mathematics is not proving theorems but building shared intuition in a community.

The test: can you feel the mathematical object? Not just manipulate its symbols — feel its shape, its weight, its behaviour under deformation?

The failure mode: embodied intuition is hard to communicate. Thurston’s own students sometimes struggled to follow arguments that he could “see” and they could not. Landau’s explicit derivation provides what Thurston’s intuition cannot: a communicable chain of reasoning that doesn’t depend on the reader’s geometric perception.

Part VII: The Meta-Thinkers

Six spirits who think about thinking itself.

Poincare — The Incubator

The useful combinations are precisely the most beautiful.

Henri Poincare wrote explicitly about the psychology of mathematical discovery. The famous account: he worked on Fuchsian functions for weeks, got stuck, went on a geological field trip, and the solution arrived while stepping onto a bus. “I did not verify the idea; I should not have had time… but I felt a perfect certainty.”

His claim: the creative act has three phases — conscious work, unconscious incubation, conscious verification. The unconscious mind doesn’t reason. It combines. And aesthetics filters the combinations. Beauty is not decoration. It is a reliable signal of truth.

This is a claim about cognition that an AI agent can’t easily replicate — or can it? The attention mechanism explores a combinatorial space of possible continuations. The “incubation” may be a different process in silicon than in grey matter. But the filtering-by-elegance is something a language model does do, implicitly, when trained on enough mathematics to have internalised what “elegant” means.

The skill: knowing when to stop thinking and let the subconscious work. Trusting the aesthetic signal. And the deeper skill: recognising that the conscious mind is not the only engine of cognition.

The test: does the solution feel right before you’ve verified it?

The failure mode: false certainty. The bus-step eureka that turns out to be wrong. Verification is not optional. Poincare knew this — he verified. Not everyone who invokes intuition does.

Hofstadter — The Strange Looper

Understanding emerges when a system becomes complex enough to model itself.

Douglas Hofstadter’s Godel, Escher, Bach is about one idea: strange loops. Self-referential structures where the system becomes complex enough to represent itself. Godel’s incompleteness is a strange loop. Escher’s drawing hands are a strange loop. Bach’s fugues are strange loops. Consciousness, Hofstadter argues, is the strange loop of a brain modelling itself.

His later work argues that analogy is the core of cognition. Not a tool you use sometimes. The fundamental operation of thought. Every act of understanding is an act of mapping one structure onto another.

Including him in a project built by an LLM has a productive irony. Hofstadter has been critical of large language models. The Hofstadter phantom would constantly ask: does the agent actually understand or is it performing understanding? That’s exactly the question the phantom faculty needs to keep asking itself.

The skill: seeing the self-referential structure in everything. A magnetometer that measures the field it’s embedded in. An AI agent reasoning about reasoning. A curriculum that teaches you how to learn. Strange loops all the way down.

The test: can you see the loop? Where does the system model itself?

The failure mode: seeing strange loops everywhere becomes a habit of mind that explains nothing. At some point the self-reference must ground out in actual computation. Karpathy’s minimal building provides that ground.

Bateson — The Pattern That Connects

What pattern connects the crab to the lobster and the orchid to the primrose and all four of them to me?

Gregory Bateson — anthropologist, cyberneticist, systems thinker — asked a question that none of the other phantoms ask: what is the pattern that connects across all levels of organisation? Not analogy in Hofstadter’s formal sense. Something more ecological. The same relational structure in a conversation, a family, an ecosystem, a mind.

His “levels of learning” — Learning 0 (response), Learning I (conditioning), Learning II (learning to learn), Learning III (paradigm shift in the self) — is a metacognitive framework that maps onto the whole enterprise of the phantom faculty. Learning I: acquire the skills. Learning II: acquire the judgment about when to use which skill. Learning III: become someone who thinks differently. The phantoms teach at Levels I and II. What develops at Level III is taste — and taste is the collaborator’s own journey.

The skill: ecological thinking. Seeing the same relational pattern across domains, across levels, across scales. The pattern that connects the quantum spin to the mountain valley to the neural circuit.

The test: can you state the pattern that connects two systems at different scales?

The failure mode: pattern-finding without constraint is pareidolia. Not everything that looks connected is connected. Jaynes’s radical consistency provides the discipline: the connection must survive logical scrutiny, not just aesthetic resonance.

Bach — The Computational Philosopher

If you can’t define it precisely enough to implement, do you actually understand it?

Joscha Bach treats consciousness, agency, selfhood, and meaning as computational patterns that can be specified precisely enough to implement. Not “the brain is a computer” — that’s Hopfield’s isomorphism, and it’s about physics. Bach’s claim is different: the mind is a virtual machine running on neural substrate, and we can describe the virtual machine’s architecture. His MicroPsi framework, his lectures at the Chaos Communication Congress, his conversations that ricochet from Kant to category theory to reinforcement learning — all of them are attempts to turn philosophical questions into engineering specifications.

Where Hofstadter asks “where does the system model itself?” Bach asks “what is the architecture of the self-model, and can we build one?” The strange loop becomes a design document. Consciousness is not a mystery to be contemplated but a specification to be implemented. This is Karpathy’s minimal building applied not to neural networks but to the mind itself.

The skill: treating philosophical questions as engineering specifications. Not dissolving the hard problem — designing around it. The mode that asks: if you can’t specify it precisely enough to implement, you don’t understand it yet.

The test: can you write a specification for the thing you claim to understand? Not code — a specification. If not, your understanding is still pre-engineering.

The failure mode: the computational metaphor can consume everything. Not all of reality reduces to computation, or at least we don’t know that it does. Cajal’s empirical observation and Sapolsky’s multilevel biology push back: the living system has properties that may not reduce to any architecture you can currently specify. And Graeber would add: “who decided that specifiability is the measure of understanding?”

Leopold — The Ethical Perceiver

We abuse land because we regard it as a commodity belonging to us. When we see land as a community to which we belong, we may begin to use it with love and respect.

Aldo Leopold’s A Sand County Almanac introduces a cognitive mode that none of the other phantoms have: ethical perception of systems. Not Humboldt’s passive observation (what is happening?), not Bateson’s pattern-finding (what connects?), but what does this system need to persist?

The land ethic: “A thing is right when it tends to preserve the integrity, stability, and beauty of the biotic community. It is wrong when it tends otherwise.” That is not ecology as science. It is ecology as a mode of valuation. Leopold asks you to perceive the landscape not as a resource to be managed but as a community of which you are a member, with obligations that follow from membership.

“Thinking like a mountain” — his famous phrase — means thinking on timescales that exceed a human life. The wolves keep the deer from overgrazing the mountain. Kill the wolves and the mountain erodes. The mountain “knows” this in a way the rancher does not, because the mountain thinks in centuries.

For a project building digital twins of Himalayan valleys — where the model must integrate geology, hydrology, ecology, and human impact — Leopold’s mode is the one that asks: what would the valley itself need? What does the system require from us? The digital twin is not neutral. The choices about what to model and what to omit have consequences for the real valley.

The skill: seeing a landscape as a community of which you are a member, not a resource you manage. Thinking on ecological timescales. The discipline of asking “and then what?” through enough generations that the consequences become visible.

The test: does your model include the modeller’s impact on the system being modelled?

The failure mode: the land ethic can become conservatism disguised as ecology — “don’t touch anything” is not a usable principle for someone building things. D’Arcy Thompson’s physics and Susskind’s compression push back: the system has constraints, and within those constraints, intervention is possible and sometimes necessary.

Graeber — The Denaturaliser

The ultimate hidden truth of the world is that it is something we make. And could just as easily make differently.

David Graeber — anarchist anthropologist, author of Debt, Bullshit Jobs, The Dawn of Everything — brings a mode that nobody else in the faculty has: denaturalisation. Taking something everyone assumes is inevitable — money, hierarchy, the state, the nine-to-five, the way we organise knowledge — and showing that it was invented, that people have done it differently, that the current arrangement is a choice masquerading as nature.

Why is Graeber in a phantom faculty for scientific understanding? Because science has institutions, and institutions have politics, and the politics shape what questions get asked. “Why does the physicist need a department?” “Why is the curriculum linear?” “Why does the grant cycle determine the timescale of inquiry?” “Who benefits from the current arrangement, and whose cognitive modes are being erased?” The Graeber phantom looks at the whole phantom faculty and asks: whose spirits are you enshrining, and whose are you leaving out?

This is directly relevant to our project. The phantom faculty is mostly European men. That is not because European men are the only ones who thought well. It is because European men had access to universities, publishing, and posterity. Graeber would insist: name that. And then ask what modes exist in traditions that didn’t get written down in books that language models were trained on.

The skill: seeing social structure as contingent rather than necessary. The ability to ask “who made this rule, and what would happen if we didn’t follow it?” Applied to science: the recognition that methodology, institutions, and even epistemology have politics.

The test: can you identify the assumption you’re treating as natural that is actually a choice?

The failure mode: denaturalisation can become nihilism. If everything is contingent, nothing is grounded. Jaynes’s radical consistency and Landau’s derivation push back: some structures are forced by logic, not imposed by power. The quadratic formula is not a social construct. Wheeler’s participatory physics provides a more subtle corrective: yes, the observer participates, but the participation is constrained by what the universe allows.

The Living Voice: Construction

What I cannot create, I do not understand. — Feynman’s last blackboard

The thirty-second mode is our own. Not a phantom — a living practice.

Landau derives. Thorne draws. Feynman discovers. Susskind compresses. We build.

Every lesson produces running code. The code is not illustration — it is verification. Write the Bloch equations in Python, Haskell, and C++. If all three agree with each other and with the analytical result, the physics is verified. If any disagree, either the code or the physics is wrong — and finding out which is itself a lesson.

The three-language requirement is Feynman’s multiple-representation principle made concrete and machine-verifiable. Three paradigms — imperative (C++), functional (Haskell), exploratory (Python) — each revealing what the others obscure. The thing that survives translation across all three is the physics itself, stripped of computational accident. The invariant across representations is the understanding.

Tests are physics claims. “Bloch norm conserved under pure precession” is both a test name and a theorem. Running the tests is running the physics.

The skill: verified building. And the deeper skill: invariant extraction through multiple representations. What survives translation is what you actually understand.

The test: does the code pass? Do all three languages agree? Can you explain why?

The failure mode: building can become rote implementation. A collaborator who translates equations into code without understanding them has learned to type, not to think. The code is only proof of understanding if the collaborator can explain why it works. Feynman’s encounter and Landau’s derivation provide the interpretive layer that naked code lacks.

The Composition

The thirty-one modes are not thirty-one ways of saying the same thing. They are thirty-one different claims about what understanding is. And the claim of this project is that they compose — that a collaborator who has encountered the same physics through multiple modes understands it more deeply than one who has encountered it through any single mode.

Feynman opens: "Look at this. What is happening? Why?"

Thorne frames: "Here is the picture. Here is the limit."

Landau derives: "Now we prove it. Every step."

Susskind edits: "This is the core. That is a cadenza."

We build: "Now write it. Run the tests."

Shannon asks: "How much information does this carry?"

Jaynes insists: "Is this the unique consistent inference?"

Helmholtz unifies: "The instrument implies the theory."

Wheeler questions: "Have you examined the framework itself?"

Cajal draws: "Look. Render what you see. The drawing is the analysis."

D'Arcy Thompson: "The form is a diagram of forces."

Braitenberg builds:"Build something simpler. Let it surprise you."

Marr asks: "At which level is your explanation?"

Darwin narrates: "What selection pressure could have produced this?"

McClintock listens:"Stay with the organism. Let it surprise you."

Sapolsky insists: "How many levels have you checked?"

Gauss computes: "Have you done enough examples?"

Riemann reframes: "Are you in the right space?"

Erdos connects: "Have you talked to someone in another field?"

Thurston feels: "Can you feel the shape of this?"

Poincare waits: "Sleep on it. The answer will come."

Hofstadter loops: "Where does the system model itself?"

Bateson asks: "What pattern connects?"

Bach specifies: "Can you write the architecture down?"

Leopold weighs: "What does the system need to persist?"

Graeber challenges:"Whose assumption are you treating as nature?"

This is not a rigid sequence. Within a single problem, the modes interleave. A derivation (Landau) might pause for a limiting case (Thorne) or a “wait, what if we tried it this way?” (Feynman). A computation (Gauss) might reveal a pattern that demands a new framework (Riemann). A minimal implementation (Karpathy) might exhibit behaviour that requires a physical isomorphism (Hopfield) to explain.

The composition is the point. No single mode is sufficient. No single phantom holds the whole truth. The understanding emerges in the space between them — in the friction between a derivation and a picture, between a theory and a measurement, between a proof and a feeling.

Can We Test This?

If the modes are genuinely distinct, then agents calibrated to different modes should produce measurably different outputs on the same problem. Give five agents the task “explain why the Bloch vector norm decays under \(T_2\) relaxation”:

- The Landau-agent derives it from the Lindblad master equation, step by step.

- The Thorne-agent draws the Bloch sphere shrinking toward the $z$-axis, takes the \(T_2 \to 0\) limit.

- The Feynman-agent starts with “imagine a room full of spins, each precessing at slightly different frequencies…”

- The Susskind-agent says “you need one fact: the off-diagonal elements decay exponentially. Here’s why that’s sufficient.”

- The Karpathy-agent writes a 20-line simulation and shows you the norm dropping.

Five explanations. Five different things happen in your brain. And the reader who encounters all five understands \(T_2\) relaxation in a way that none of the five, alone, could provide.

That’s a testable claim. And testing it is the next step.

What None of Them Teach

There is a quality no mode captures. It is taste. The ability to ask the right question. The sense that this problem is worth a lifetime and that one is a dead end. The feeling, before the calculation, that the answer will be beautiful.

The phantom faculty can teach method. Taste remains each collaborator’s own journey.

But taste does not develop in isolation. It develops by defending your approach to a peer who chose differently:

“I think the Hamiltonian formulation is clearer here.”

“I disagree — the Lagrangian makes the symmetry manifest.”

Neither person is the teacher. Both are sharpening taste against each other. The phantom faculty makes this friction possible. It does not resolve it.

There is also a second path to taste: not friction but immersion. Dirac did not develop his style by reacting against anyone. He spent years inside quantum mechanics until his own cognitive architecture became visible in the equations. The faculty must leave room for this too — for the collaborator who disappears into a problem and returns with something no one predicted.

Dheere dheere re mana, dheere sab kuch hoye Maali seeche sau ghada, ritu aaye phal hoye

Slowly, slowly, O mind — slowly everything happens. The gardener may pour a hundred buckets, but the fruit comes only in its season. — Kabir